Actionable Intelligence

The main requirement for machine vision systems in food automation is extracting reliable information in challenging environments.

The image data from these systems must then flow into larger systems that inspect, classify, guide, and act. Across soil, processing, packaging, and storage, the applications may look very different, but the underlying imaging problems often have more in common than first appears. Solving them is not only about image quality. It also depends on camera features that may be overlooked during initial evaluation and later become essential to reliable system integration and operation.

Different Applications, Similar Challenges

Food automation spans a wide range of environments and tasks. A system may need to detect rocks in a dusty field, classify fish during handling, locate irregular organic surfaces for robotic cleaning, inspect wrapped products through glare, or guide autonomous forklifts around reflective warehouse infrastructure. At first glance, these applications seem unrelated. But when broken down into their core vision requirements, the same fundamental imaging challenges often repeat.

Biological & environmental variability

Nature is not uniform. Food products, organic surfaces, outdoor environments, and moving targets vary widely in size, shape, moisture, position, and appearance.

Real-time decision making

In many systems, detection alone is not enough. The image data must be processed quickly enough to trigger actuation within the timing constraints of the process.
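As a rough illustration of such a timing constraint, the sketch below assumes a conveyor running at constant speed, with the camera mounted a fixed distance upstream of an actuator such as a reject gate. The stage names and every number are hypothetical. The point is only that the whole chain, from exposure to actuation, must fit inside the time the product takes to reach the actuator.

```python
from dataclasses import dataclass


@dataclass
class LatencyBudget:
    """Hypothetical worst-case latency of each pipeline stage, in milliseconds."""
    exposure_ms: float
    transfer_ms: float
    processing_ms: float
    actuation_ms: float

    def total_ms(self) -> float:
        # Time from the start of exposure until the actuator has taken effect.
        return self.exposure_ms + self.transfer_ms + self.processing_ms + self.actuation_ms


def available_time_ms(camera_to_actuator_m: float, belt_speed_m_s: float) -> float:
    """Time a product needs to travel from the camera's view to the actuator."""
    return camera_to_actuator_m / belt_speed_m_s * 1000.0


def slack_ms(budget: LatencyBudget, camera_to_actuator_m: float, belt_speed_m_s: float) -> float:
    """Positive slack: the decision arrives in time. Negative: the product has already passed."""
    return available_time_ms(camera_to_actuator_m, belt_speed_m_s) - budget.total_ms()


# Example: camera 0.5 m upstream of a reject gate, belt running at 2 m/s.
budget = LatencyBudget(exposure_ms=0.5, transfer_ms=8.0, processing_ms=60.0, actuation_ms=20.0)
print(available_time_ms(0.5, 2.0))  # 250.0
print(slack_ms(budget, 0.5, 2.0))   # 161.5
```

Using worst-case rather than average latencies is the conservative choice here, because a single late decision is enough to let a defective product pass.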

Optical ambiguity

Sometimes the signal needed for inspection is weak, hidden, or absent in visible light. Glare, transparent materials, wet surfaces, and subsurface defects can make standard RGB imaging insufficient.

These three challenges do not map one-to-one to specific applications; a single application may involve several of them at once. That is why the same camera technologies often appear across very different systems. A rugged 2D camera, a compact embedded camera, a 3D Time-of-Flight camera, a SWIR camera, or a polarization camera can take a leading role in a surprising variety of use cases when the underlying imaging challenge is similar.

Beyond the Sensor:

Important Camera Features in Food Automation

Image quality and sensor performance matter, but they are not the only considerations when selecting a camera for food automation. Real systems may also depend on environmental protection, compact size, synchronization, bandwidth, triggering, and third-party software or hardware compatibility. In some applications, overlooked features such as rugged M12 screw-lock cable connectors or an extended imager head can matter more than megapixels and frame rates alone.

Rugged design for stable operation on industrial equipment

- Shock and vibration certification

- Secure mounting holes

- EN 60068-2-27 (shock), EN 60068-2-64 (random vibration), EN 60068-2-6 (sinusoidal vibration)

Environmental protection for harsh environments

- IP67 dust and water protection

- Wide operating temperatures

- Sealed connectors

- Industrial EMC immunity

Compact form factor for tight mechanical spaces and embedded systems

- 24 × 24 mm size, ~30 g

- 90° & 180° form factor

- Extended head and different port options

Timing and sync to coordinate with robots, conveyors, and other devices

- Counters and timers

- Chunk data, event data

- Time stamps

I/O and trigger for deterministic image capture and machine control

- GPIO

- Opto-isolated & non-isolated I/O

- PTP (IEEE 1588)

- Hardware & software trigger

Interface & bandwidth for reliable streaming

- Ethernet-based cameras

- 1GigE, 2.5GigE, 5GigE

- 10GigE, 25GigE with RDMA

System integration and compatibility

- Arena SDK, APIs, code examples

- 3rd party software compatibility

- GigE Vision, industry standards
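Interface selection often starts with simple arithmetic: an uncompressed stream needs roughly width × height × bits per pixel × frame rate bits per second. The sketch below compares that figure with the nominal Ethernet line rates listed above. The 90% usable fraction is an assumed allowance for protocol overhead and headroom, not a measured value, and the sketch ignores RDMA, compression, and several cameras sharing one link.

```python
from typing import Optional

# Nominal Ethernet line rates in Gbit/s for the interfaces listed above.
LINK_RATES_GBPS = {
    "1GigE": 1.0,
    "2.5GigE": 2.5,
    "5GigE": 5.0,
    "10GigE": 10.0,
    "25GigE": 25.0,
}


def required_gbps(width: int, height: int, bits_per_pixel: int, fps: float) -> float:
    """Raw, uncompressed image data rate in Gbit/s."""
    return width * height * bits_per_pixel * fps / 1e9


def smallest_link(width: int, height: int, bits_per_pixel: int, fps: float,
                  usable_fraction: float = 0.9) -> Optional[str]:
    """Slowest listed link whose assumed usable capacity covers the stream, or None."""
    need = required_gbps(width, height, bits_per_pixel, fps)
    # Check links from slowest to fastest and return the first that fits.
    for name, rate in sorted(LINK_RATES_GBPS.items(), key=lambda item: item[1]):
        if rate * usable_fraction >= need:
            return name
    return None


# Example: a hypothetical 2448 x 2048 sensor streaming 8-bit mono images.
print(round(required_gbps(2448, 2048, 8, 20), 3))  # 0.802
print(smallest_link(2448, 2048, 8, 20))            # 1GigE
print(smallest_link(2448, 2048, 8, 75))            # 5GigE
```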

With that broader view of camera selection in mind, the next sections look at how these recurring imaging and integration challenges appear across each stage of food automation. We begin with soil, where outdoor variability, motion, and rugged operating conditions make reliable detection especially demanding.

From Farm to Forklift: Soil, Processing, Packaging, Storage

Soil:

Detecting Targets in Harsh, Uncontrolled Environments

At the soil stage, machine vision systems operate in some of the least controlled conditions in the entire workflow. Dust, vibration, changing lighting, moving machinery, debris, and irregular terrain all make it difficult to capture usable image data. The challenge is not simply to “see” an object, but to detect it reliably while the environment itself is constantly changing.

Outdoor agricultural and field-based systems rarely operate in stable lighting or have fixed object presentation. Targets may be partially hidden, irregular in shape, or surrounded by other visually confusing elements. At the same time, the machine carrying the camera is often in motion, so imaging, processing, and action must happen together.

Key Insight: In harsh outdoor environments, camera ruggedness, motion stability, and real-time response matter just as much as image quality.

Primary challenges

Biological & environmental variability

Real-time decision making

Rock Picking

Rock picking systems must detect foreign objects under motion while operating in dust, vibration, and uncontrolled lighting. This is a strong example of how rugged 2D imaging can solve a difficult real-world problem when motion clarity and reliable triggering matter more than lab-perfect conditions.

Camera used in system: Triton IP67 camera.

A rugged industrial 2D camera with a global shutter can capture fast-moving scenes clearly, while IP67 protection and shock and vibration certified hardware support outdoor deployment in rough terrain.
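A global shutter removes rolling-shutter skew, but motion blur is still set by exposure time. A common target is to keep blur at or below about one pixel. The sketch below estimates blur for motion parallel to the image rows; the vehicle speed, field of view, and pixel count are hypothetical.

```python
def metres_per_pixel(fov_m: float, pixels_across: int) -> float:
    """Size of one pixel projected onto the scene, assuming a flat, fronto-parallel view."""
    return fov_m / pixels_across


def blur_pixels(speed_m_s: float, exposure_s: float, fov_m: float, pixels_across: int) -> float:
    """Distance the scene moves during the exposure, expressed in pixels."""
    return speed_m_s * exposure_s / metres_per_pixel(fov_m, pixels_across)


def max_exposure_s(speed_m_s: float, fov_m: float, pixels_across: int,
                   max_blur_px: float = 1.0) -> float:
    """Longest exposure that keeps motion blur at or below max_blur_px."""
    return max_blur_px * metres_per_pixel(fov_m, pixels_across) / speed_m_s


# Example: machine moving at 2 m/s, 1.0 m field of view imaged across 2000 pixels.
print(round(blur_pixels(2.0, 0.001, 1.0, 2000), 2))  # 4.0
print(round(max_exposure_s(2.0, 1.0, 2000) * 1e6))   # 250 (microseconds)
```

A shorter exposure has to be paid for with more light or more gain, which is why lighting and sensor sensitivity typically remain part of the design even for a rugged outdoor system.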

Crop Spraying

Precision crop spraying systems must distinguish crops from weeds inside tightly constrained mechanical spaces, then trigger localized spray decisions in real time. Here, the biggest difficulty is not only image analysis, but embedding the vision system into existing agricultural machinery.

Camera used in system: Phoenix modular camera.

A very small, lightweight camera platform is often more important than raw sensor size. Form factor, orientation options, and flexible integration become critical to whether the system can be deployed at all.
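Once a weed is detected, the system must decide which nozzle to open and when. The sketch below assumes a camera looking straight down ahead of the boom, a uniform ground sampling distance, evenly spaced nozzles, and constant vehicle speed. The `SprayGeometry` fields and all values are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SprayGeometry:
    """Hypothetical geometry for a downward-looking camera mounted ahead of a spray boom."""
    image_width_px: int
    image_height_px: int
    metres_per_px: float         # ground sampling distance, assumed uniform over the image
    boom_width_m: float          # width covered by the nozzles, centred on the image
    nozzle_count: int
    image_centre_ahead_m: float  # distance from the nozzle line to the image centre


def nozzle_index(g: SprayGeometry, col_px: float) -> Optional[int]:
    """Map an image column to the nozzle covering that strip of ground, or None if off-boom."""
    across_m = (col_px - g.image_width_px / 2) * g.metres_per_px
    pos_m = across_m + g.boom_width_m / 2  # 0 at the left end of the boom
    if not 0 <= pos_m < g.boom_width_m:
        return None
    return int(pos_m / (g.boom_width_m / g.nozzle_count))


def fire_delay_s(g: SprayGeometry, row_px: float, speed_m_s: float, latency_s: float) -> float:
    """Seconds to wait after the decision before commanding the valve.

    latency_s covers processing time since capture plus valve response time.
    A negative result means the pipeline is too slow for this target.
    """
    # Row 0 is assumed to be the forward edge of the image (farthest from the boom).
    ahead_m = g.image_centre_ahead_m + (g.image_height_px / 2 - row_px) * g.metres_per_px
    return ahead_m / speed_m_s - latency_s


g = SprayGeometry(2000, 1000, 0.001, 2.0, 8, 1.5)
print(nozzle_index(g, 1300))                     # 5
print(round(fire_delay_s(g, 500, 2.0, 0.1), 3))  # 0.65
```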

Processing:

Turning Biological Complexity into Actionable Data

Automation in food processing requires multiple devices to synchronize and act deterministically. Although the environment changes at this stage, many of the same challenges from the soil stage continue in a different form: moisture, dirt, steam, washdown conditions, and other sources of environmental variability. Applications must handle organic targets with variable surfaces and meet tight tolerances while classifying, localizing, measuring, inspecting, or guiding an action precisely in 2D or 3D. This is where food automation becomes especially complex.

This stage is also where different sensing modalities begin to show their value more clearly. Some tasks rely on compact 2D classification. Others require 3D point clouds or imaging beyond visible light.

Key Insight: In food processing, reliable automation depends on matching the sensing modality to the task, whether that means 2D classification, 3D guidance, or imaging beyond visible light.

Primary challenges

Biological & environmental variability

Real-time decision making

Optical ambiguity

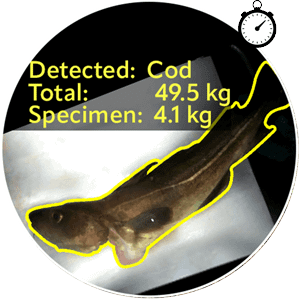

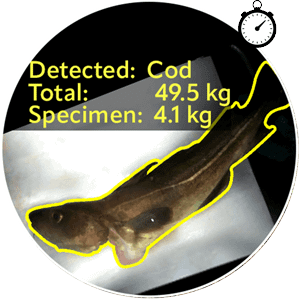

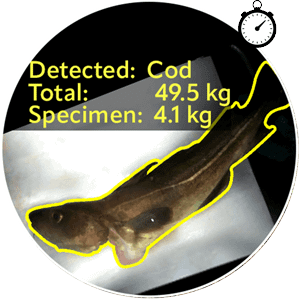

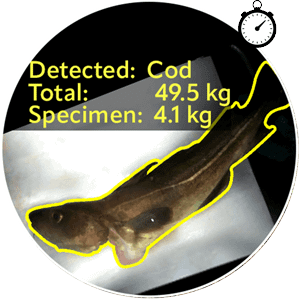

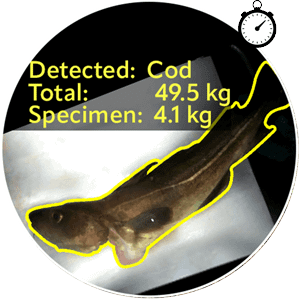

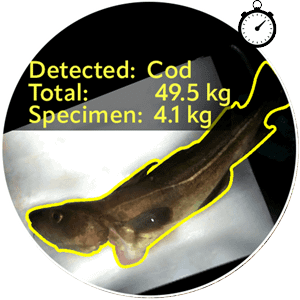

Catch Registration

When fish are being collected and handled continuously, the vision system must classify species, estimate size or biomass, and track objects under variable motion and lighting. This is a strong example of how biological variability and real-time decision making can come together in one system.

Camera used in system: Phoenix modular camera.

A compact camera can be integrated directly at the point of collection within a system enclosure, where space may be limited and handling conditions may be unpredictable.
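Biomass is often estimated from length using the standard fisheries length-weight relationship W = a·L^b, where a and b are species-specific. The sketch below assumes the vision system already provides head and tail keypoints and a calibrated scale in centimetres per pixel at the fish's distance. The species names and coefficients are placeholders, not published values.

```python
import math
from typing import Dict, Tuple

# Placeholder coefficients for W = a * L**b (W in grams, L in centimetres).
# Real values are species- and region-specific and must come from a reference
# source or from calibration against weighed samples.
LENGTH_WEIGHT: Dict[str, Tuple[float, float]] = {
    "species_a": (0.01, 3.0),
    "species_b": (0.008, 3.1),
}


def length_cm(head_px: Tuple[float, float], tail_px: Tuple[float, float],
              cm_per_px: float) -> float:
    """Straight-line snout-to-tail length in centimetres from two image keypoints."""
    return math.hypot(tail_px[0] - head_px[0], tail_px[1] - head_px[1]) * cm_per_px


def estimate_weight_g(species: str, length: float) -> float:
    """Length-weight relationship W = a * L**b for a classified species."""
    a, b = LENGTH_WEIGHT[species]
    return a * length ** b


fish_len = length_cm((100, 200), (400, 600), cm_per_px=0.08)
print(round(fish_len, 1))                               # 40.0
print(round(estimate_weight_g("species_a", fish_len)))  # 640
```

A straight-line keypoint distance underestimates the length of a curved fish; measuring along the body midline is one common refinement.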

Cow Cleaning

In many automated food applications, robots need to interact with a living animal or another non-rigid biological surface. In these situations, 3D geometry becomes critical. The challenge is not just to see the target, but to extract stable point cloud data under wet, reflective, and moving conditions.

Camera used in system: Helios2+ 3D ToF IP67 camera.

LUCID’s 3D Time-of-Flight camera can provide the depth data needed for robotic guidance, while HDR and industrial protection (IP67) help maintain reliability in difficult operating conditions.
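For context on what a ToF camera measures, continuous-wave (indirect) ToF sensors commonly recover distance from the phase shift φ of modulated light: d = c·φ / (4π·f_mod), with an unambiguous range of c / (2·f_mod). The sketch below shows that arithmetic with four correlation samples. It is a generic textbook model with an assumed sign convention and a hypothetical 100 MHz modulation frequency, not a description of how the Helios2+ processes data internally.

```python
import math

C_M_S = 299_792_458.0  # speed of light in m/s


def phase_from_samples(c0: float, c90: float, c180: float, c270: float) -> float:
    """Phase in [0, 2*pi) from four correlation samples.

    Assumes samples follow C(theta) = B + A*cos(phi + theta) at theta = 0, 90, 180,
    270 degrees. Sensors differ in sign convention, so this is illustrative only.
    """
    return math.atan2(c270 - c90, c0 - c180) % (2 * math.pi)


def amplitude(c0: float, c90: float, c180: float, c270: float) -> float:
    """Modulation amplitude A; low values indicate a weak, less reliable return."""
    return math.hypot(c270 - c90, c0 - c180) / 2


def depth_m(phi: float, f_mod_hz: float) -> float:
    """Distance from phase; light travels out and back, hence 4*pi rather than 2*pi."""
    return C_M_S * phi / (4 * math.pi * f_mod_hz)


def unambiguous_range_m(f_mod_hz: float) -> float:
    """Distances beyond this wrap around to a smaller apparent value."""
    return C_M_S / (2 * f_mod_hz)


# Simulate samples for a surface 0.75 m away, then recover its distance.
f_mod = 100e6
true_phi = 4 * math.pi * f_mod * 0.75 / C_M_S
samples = [500 + 200 * math.cos(true_phi + math.radians(t)) for t in (0, 90, 180, 270)]
print(round(depth_m(phase_from_samples(*samples), f_mod), 6))  # 0.75
print(round(unambiguous_range_m(f_mod), 3))                    # 1.499
```

Thresholding on amplitude is a common way to discard weak returns, such as those from dark or strongly reflective wet surfaces, before the point cloud is used for guidance.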

Food Safety

In food safety inspection, contamination may appear on irregular carcass surfaces under wet and reflective conditions, often with limited inspection time. The challenge is not only to detect the contamination, but to map it precisely enough for downstream removal or treatment.

Recommended camera: Helios2 Chroma RGB-D IP67 camera.

An RGB-D camera combines color and depth in one device. This helps connect appearance-based detection to spatial action and reduces integration complexity compared with building separate 2D and 3D systems from scratch.
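Connecting a color detection to a spatial action usually comes down to back-projecting the detected pixels into 3D. The sketch below assumes that color and depth are pixel-aligned, that depth is reported as Z along the optical axis, and that pinhole intrinsics (fx, fy, cx, cy) are known; the intrinsic values are hypothetical. The result is in the camera frame, so a hand-eye calibration is still needed to express it in robot coordinates.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Optional, Tuple

Point3 = Tuple[float, float, float]


@dataclass
class Intrinsics:
    """Pinhole camera parameters in pixels (hypothetical values in the example)."""
    fx: float
    fy: float
    cx: float
    cy: float


def pixel_to_point(u: float, v: float, z_m: float, k: Intrinsics) -> Point3:
    """Back-project pixel (u, v) with depth Z into camera-frame XYZ in metres."""
    return ((u - k.cx) * z_m / k.fx, (v - k.cy) * z_m / k.fy, z_m)


def region_centroid(pixels: Iterable[Tuple[int, int]],
                    depth_at: Callable[[int, int], Optional[float]],
                    k: Intrinsics) -> Optional[Point3]:
    """Mean 3D position of a detected region, skipping pixels without valid depth."""
    points = []
    for u, v in pixels:
        z = depth_at(u, v)
        if z is not None and z > 0:
            points.append(pixel_to_point(u, v, z, k))
    if not points:
        return None
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(3))


k = Intrinsics(fx=500.0, fy=500.0, cx=320.0, cy=240.0)
print(pixel_to_point(420, 240, 1.0, k))  # (0.2, 0.0, 1.0)
```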

Material Insight

Some processing challenges are not about geometry at all. Bruising, moisture variation, and subtle material differences may be physically present but difficult or impossible to distinguish in standard visible imaging. When visible light is not enough, engineers must look to other wavelengths or properties of light to find the needed contrast.

Recommended cameras: Atlas SWIR and Triton SWIR IP67 cameras.

SWIR imaging expands the usable wavelength range beyond visible light, allowing the system to capture information about moisture content, bruising, and material properties that standard RGB cameras may miss.
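One common way to turn SWIR contrast into a number is a normalized band ratio. Water absorbs strongly near 1450 nm, so comparing reflectance there with a nearby band where water absorbs less (for example, around 1300 nm) gives a simple moisture indicator. The sketch assumes two band-filtered images, dark and white reference frames for reflectance calibration, and a threshold chosen from labeled samples; all values are hypothetical.

```python
from typing import List

Image = List[List[float]]


def reflectance(raw: float, dark: float, white: float) -> float:
    """Relative reflectance from a raw value and dark/white reference values at the same pixel."""
    span = white - dark
    if span <= 0:
        raise ValueError("white reference must be brighter than dark reference")
    return (raw - dark) / span


def moisture_index(r_ref: float, r_water: float, eps: float = 1e-9) -> float:
    """Normalized difference: higher values mean more absorption in the water band."""
    return (r_ref - r_water) / (r_ref + r_water + eps)


def flag_moist(ref_band: Image, water_band: Image, threshold: float) -> List[List[bool]]:
    """Per-pixel mask where the moisture index exceeds a (hypothetical) threshold."""
    return [
        [moisture_index(r, w) > threshold for r, w in zip(row_r, row_w)]
        for row_r, row_w in zip(ref_band, water_band)
    ]


print(reflectance(600, 100, 1100))                   # 0.5
print(flag_moist([[0.6, 0.6]], [[0.3, 0.55]], 0.2))  # [[True, False]]
```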

Packaging:

Reliable Inspection for Reflective Packaging and Variable Orientations

By the time products reach packaging, many of the challenges have shifted. The target may now be more controlled in shape and position, but packaging materials themselves often create new optical problems. Plastic films, shrink wrap, glossy labels, sealed trays, and transparent surfaces can all interfere with inspection by creating glare or hiding important details. At the same time, the system must maintain speed and repeatability while running continuously with variations in product orientation.

Key Insight: In packaging automation, finding enough contrast to detect defects or determine object orientation is only part of the challenge. A camera’s timing, synchronization, and integration with the larger machine often matter just as much.

Primary challenges

Real-time decision making

Optical ambiguity

Wrapper Inspection

In wrapper inspection, the signal may already be there, but glare from plastic films or glossy surfaces makes it difficult to recover. Standard imaging can struggle because specular reflections obscure the details needed for inspection.

Camera used in system: Triton Polarization and Phoenix Polarization cameras.

Polarization imaging can separate specular from diffuse reflection, making it easier to recover surface detail through reflective packaging and to identify defects in the wrapper itself.
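A common way to quantify this uses the linear Stokes parameters, computed from intensities behind 0°, 45°, 90°, and 135° polarizers as four-directional polarization sensors provide. The sketch below works on single pixel values and skips demosaicing. Treating the polarized component as specular glare is a simplification: diffuse reflection can also be partly polarized, especially at grazing angles.

```python
import math
from typing import Tuple


def stokes(i0: float, i45: float, i90: float, i135: float) -> Tuple[float, float, float]:
    """Linear Stokes parameters (S0, S1, S2) from four polarizer-angle intensities."""
    s0 = (i0 + i45 + i90 + i135) / 2  # total intensity, averaged over both pairs
    s1 = i0 - i90
    s2 = i45 - i135
    return s0, s1, s2


def dolp(s0: float, s1: float, s2: float) -> float:
    """Degree of linear polarization, 0 (unpolarized) to 1 (fully polarized)."""
    return math.hypot(s1, s2) / s0 if s0 > 0 else 0.0


def aolp_deg(s1: float, s2: float) -> float:
    """Angle of linear polarization in degrees, in (-90, 90]."""
    return math.degrees(0.5 * math.atan2(s2, s1))


def unpolarized_part(s0: float, s1: float, s2: float) -> float:
    """Intensity left after removing the linearly polarized (often specular) component."""
    return s0 - math.hypot(s1, s2)


# A diffuse (unpolarized) signal of 80 plus 40 of glare polarized at 0 degrees.
s = stokes(80, 60, 40, 60)
print(s)                   # (120.0, 40, 0)
print(round(dolp(*s), 3))  # 0.333
print(unpolarized_part(*s))  # 80.0
```

Because glare is often strongly polarized, high-DoLP regions are a reasonable first cue for where specular reflections dominate.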

Blister Packaging

In blister packaging and pick-and-place systems, the task is often to identify packaged items, determine their position and orientation, and send coordinates to a robot or other downstream process. Even when the products are more regular, the system still has to handle reflective materials and cluttered layouts while operating continuously, 24/7.

Camera used in system: Triton IP67 camera.

An industrial 2D camera with synchronization features can support repeatable detection, timing, and robotic coordination. In these applications, features like hardware triggering, PTP, time stamps, action commands, and third-party software compatibility can be as important as the sensor itself.
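To see why shared timestamps matter, consider a conveyor-tracking step: the camera reports where an item was at capture time, and the robot needs to know where it will be at pick time. The sketch assumes that image timestamps and the robot controller's clock share one time base (for example, via PTP), that belt speed is constant, and that positions are already converted into the robot frame. The `Detection` fields are hypothetical. Many installations track with encoder counts instead of time, and the same idea applies.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Detection:
    """Hypothetical detection already expressed in the robot frame."""
    x_m: float         # position along the belt at capture time
    y_m: float         # position across the belt
    angle_deg: float   # in-plane orientation of the item
    timestamp_ns: int  # capture time on the shared clock


def predict_pick_pose(det: Detection, belt_speed_m_s: float,
                      pick_time_ns: int) -> Tuple[float, float, float]:
    """Advance the detection along the belt to the planned pick time."""
    dt_s = (pick_time_ns - det.timestamp_ns) / 1e9
    if dt_s < 0:
        raise ValueError("pick time is earlier than the capture time")
    # Assumes items ride the belt without slipping or rotating.
    return det.x_m + belt_speed_m_s * dt_s, det.y_m, det.angle_deg


def in_pick_window(pose: Tuple[float, float, float], start_m: float, end_m: float) -> bool:
    """True if the predicted position lies inside the robot's reachable zone."""
    return start_m <= pose[0] <= end_m


det = Detection(x_m=0.10, y_m=0.05, angle_deg=30.0, timestamp_ns=1_000_000_000)
pose = predict_pick_pose(det, belt_speed_m_s=0.5, pick_time_ns=1_400_000_000)
print(tuple(round(v, 3) for v in pose))  # (0.3, 0.05, 30.0)
```

The same arithmetic shows why clock agreement matters: an uncorrected 10 ms offset between camera and robot clocks at 0.5 m/s shifts the pick point by 5 mm.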

Storage & Logistics:

Real-Time Perception for Safe Material Movement

In storage and logistics, the challenge becomes less about inspecting a product and more about understanding an environment in motion. Autonomous forklifts, pallet handling systems, and other logistics platforms must continuously perceive the space around them, detect objects and structures, and make safe navigation or positioning decisions in real time.

This is where machine vision becomes part of a more autonomous system. Storage and logistics bring together many of the same challenges seen earlier in the workflow: environmental variability, limited contrast on reflective surfaces, and the need for real-time action. But here, the image data feeds a continuous perception system rather than a single inspection event.

Key Insight: In autonomous logistics, the goal is not just to capture 3D data, but to provide dependable spatial information continuously and quickly enough for safe navigation and positioning.

Primary challenges

Biological & environmental variability

Real-time decision making

Optical ambiguity

Autonomous Forklifts

Autonomous forklift systems must navigate dynamic warehouse environments, detect rack positions, estimate distances, and operate safely around reflective industrial surfaces and moving obstacles. Even when the workflow is structured, the environment is rarely static.

Camera used in system: Helios2+ 3D ToF IP67 camera.

LUCID’s 3D Time-of-Flight camera can provide the depth data needed for navigation, rack detection, and distance estimation, while HDR and industrial protection (IP67) help maintain reliability around reflective surfaces and in demanding warehouse conditions.
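As one illustration of turning depth data into a navigation decision, the sketch below finds the nearest point inside the truck's travel corridor and compares it with a simple stopping distance: reaction distance plus braking distance v²/(2a). The frame convention (x lateral, y height above the floor, z forward), corridor dimensions, deceleration, and margin are all hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass
class Corridor:
    """Hypothetical travel corridor in the vehicle frame (x lateral, y up, z forward)."""
    half_width_m: float
    min_height_m: float  # ignore floor returns below this height
    max_height_m: float


def stopping_distance_m(speed_m_s: float, decel_m_s2: float, reaction_s: float) -> float:
    """Distance covered during the reaction time plus braking at constant deceleration."""
    return speed_m_s * reaction_s + speed_m_s ** 2 / (2 * decel_m_s2)


def nearest_in_corridor(points: Iterable[Tuple[float, float, float]], c: Corridor) -> float:
    """Smallest forward distance among points inside the corridor (inf if none)."""
    nearest = math.inf
    for x, y, z in points:
        if z > 0 and abs(x) <= c.half_width_m and c.min_height_m <= y <= c.max_height_m:
            nearest = min(nearest, z)
    return nearest


def must_stop(points: Iterable[Tuple[float, float, float]], c: Corridor, speed_m_s: float,
              decel_m_s2: float, reaction_s: float, margin_m: float = 0.5) -> bool:
    """True if the nearest obstacle is within stopping distance plus a margin."""
    limit = stopping_distance_m(speed_m_s, decel_m_s2, reaction_s) + margin_m
    return nearest_in_corridor(points, c) <= limit


corridor = Corridor(half_width_m=0.8, min_height_m=0.05, max_height_m=2.2)
cloud = [(0.2, 0.5, 2.9), (1.5, 0.5, 1.0), (0.0, 0.01, 1.0)]  # obstacle, side rack, floor
print(round(stopping_distance_m(2.0, 1.0, 0.3), 2))  # 2.6
print(must_stop(cloud, corridor, 2.0, 1.0, 0.3))     # True
```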

Different Applications. Shared Imaging Challenges.

Across soil, processing, packaging, and storage, food automation systems may look very different, but the same core imaging challenges often repeat. Biological and environmental variability, real-time decision making, and optical ambiguity appear in different combinations throughout the workflow, which is why the same camera technologies can support very different applications. A successful application depends on more than sensing modality alone. Rugged design, synchronization, triggering, bandwidth, software support, and system integration often play just as important a role in reliable deployment.

The goal is not simply to capture images, but to provide dependable data that larger systems can turn into action.

Sensing Modality Map

Biological & Environmental Variability

Outdoor conditions, moisture, motion, irregular geometry, and changing target size, shape, and position.

Rugged 2D/3D, Compact Embedded

Use rugged housings, compact form factors, or depth sensing when the scene is physically variable and difficult to control.

Real-Time Decision Making

Detection must feed immediate action for robots, conveyors, sprayers, or autonomous systems.

Sync, Trigger, Bandwidth, 2D/3D

When image data must drive action right away, camera timing, trigger control, and reliable streaming become central.

Optical Ambiguity

The needed contrast may be hidden by glare, transparency, wet surfaces, or limits of visible light.

SWIR, Polarization, RGB-D

Move beyond standard RGB when the signal is weak, hidden, or masked by reflections, or when the task needs color and depth aligned together.